Persuasive Privacy

Chalmers University of Technology, 5th June 2026

Adelaide University

Today’s talk

- Differential privacy: de facto

- Privacy from the ground up: semantics

- Persuasive privacy: not coercion

- Differential privacy: redux

- Privacy without noise (?)

Bon, Bailie, Rousseau & Robert (2026) ICML 2026

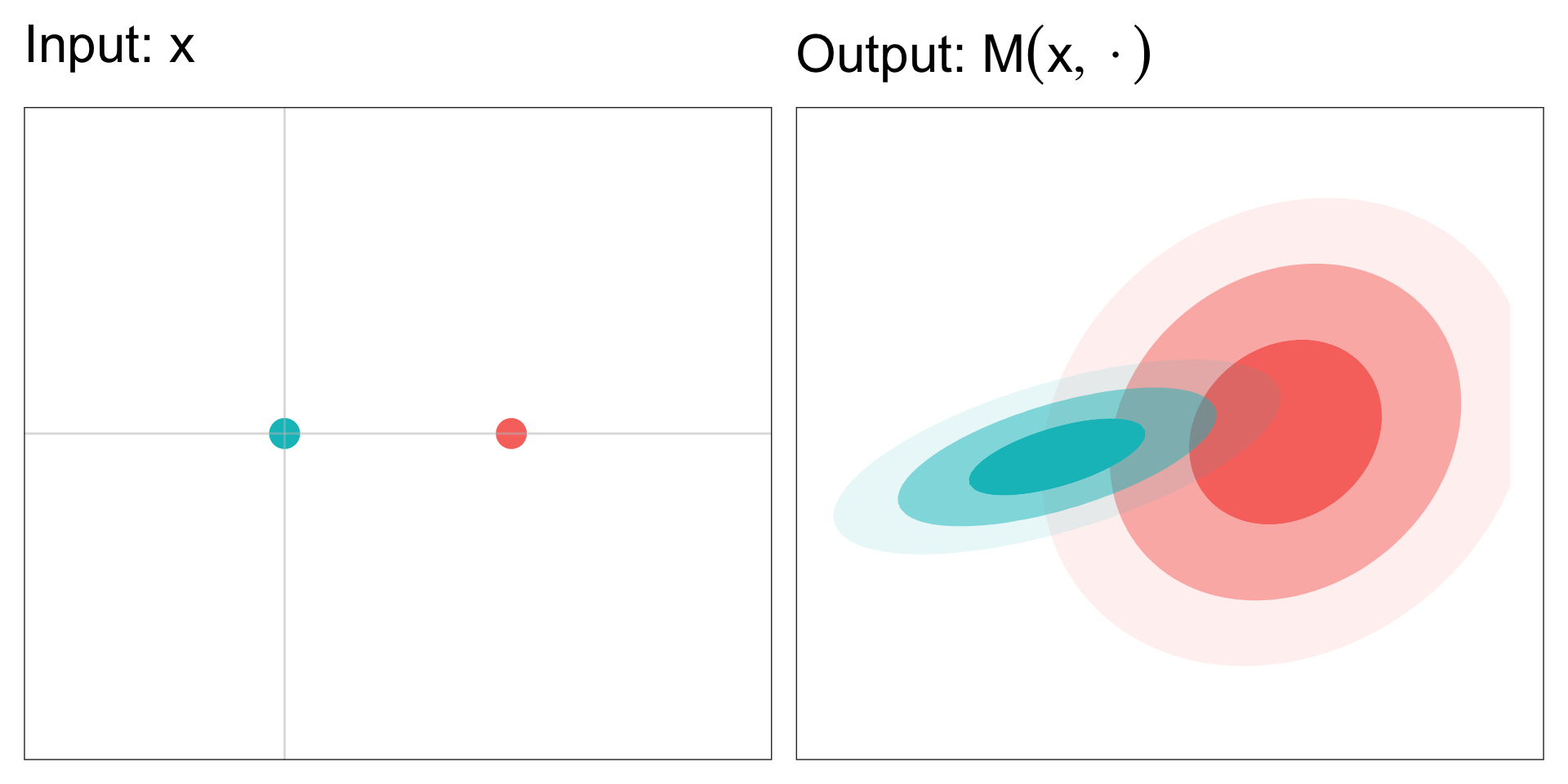

Differential privacy

DP framework

\text{Dataset}~x \overset{\text{release}}{\longrightarrow} \text{statistic}~T

- T \sim M(x,\cdot), with some mechanism M

Example

- T = \frac{1}{n}\sum_{i=1}^{n} x_i + Z with Z \sim \mathcal{N}(0,\sigma^2)

M(x,\cdot) = \mathcal{N}(\overline{x},\sigma^2)
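A minimal sketch of this example mechanism, assuming the data are a list of floats. The function name `gaussian_mean_mechanism` and the `rng` argument are illustrative choices, not from the paper.

```python
import random
from statistics import fmean


def gaussian_mean_mechanism(x, sigma, rng=None):
    """Draw one release T ~ N(mean(x), sigma^2), i.e. a sample from M(x, .)."""
    rng = rng or random.Random()
    return fmean(x) + rng.gauss(0.0, sigma)


x = [2.0, 4.0, 6.0]
# A seeded generator makes the release reproducible for illustration only.
t = gaussian_mean_mechanism(x, sigma=0.5, rng=random.Random(0))
```

Setting `sigma=0.0` collapses the mechanism to the exact (deterministic) mean.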

DP Intuition

To limit information leaked about data x by statistic T,

control the sensitivity of the mechanism M(x,\cdot) w.r.t. x.

DP Definition

Borrow from Lipschitz continuity (Bailie and Gong 2024).

d_\text{P}[M(x,\cdot),M(x^\prime,\cdot)] \leq \epsilon~d_\text{X}[x,x^\prime]

for all x,x^\prime \in \mathcal{D}.

DP Definition

A mechanism M is \epsilon-DP if

\sup_{S\in\mathcal{S}}\left\vert\ln\frac{M(x,S)}{M(x^\prime,S)}\right\vert \leq \epsilon

for all neighbouring x,x^\prime \in \mathcal{D} (written x \sim x^\prime), i.e. differing by at most one element.

Pure Differential Privacy

M(x,S) \leq \exp\{\epsilon\} M(x^\prime, S) for all x \sim x^\prime and S \in \mathcal{S}.
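As a numerical illustration of the pure DP bound, the sketch below uses the Laplace mechanism, a standard example that is not on these slides. It assumes neighbouring datasets move the released statistic by at most `sensitivity`, and checks the bound on densities over a grid (a pointwise density bound implies the bound for every set S).

```python
import math


def laplace_log_density(t, loc, scale):
    """Log density of a Laplace(loc, scale) distribution at t."""
    return -math.log(2.0 * scale) - abs(t - loc) / scale


def max_abs_log_ratio(loc_x, loc_x_prime, scale, grid):
    """Largest |log m(x,t) - log m(x',t)| over the grid of outputs t."""
    return max(
        abs(laplace_log_density(t, loc_x, scale)
            - laplace_log_density(t, loc_x_prime, scale))
        for t in grid
    )


epsilon = 0.5
sensitivity = 1.0  # assumption: neighbours shift the statistic by at most 1
scale = sensitivity / epsilon
grid = [i / 10.0 for i in range(-200, 201)]
worst = max_abs_log_ratio(0.0, sensitivity, scale, grid)
```

Here `worst` should not exceed `epsilon`, and the bound is attained for outputs outside the interval between the two locations.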

Some DP Properties

Focusing on (pure) \epsilon-DP.

- Composition: If M_1 and M_2 are \epsilon-DP then

M_1 \otimes M_2 ~\text{is}~ 2\epsilon\text{-DP}

- Post-processing: If M_1 is \epsilon-DP and the kernel K is independent of the data then

M_1 K ~\text{is}~ \epsilon\text{-DP}

Some DP Properties

Focusing on \epsilon-DP. Examples:

- Composition:

If releasing the mean and variance of a dataset are each \epsilon-DP, then the joint release is 2\epsilon-DP.

- Post-processing:

If M constructs a histogram that is \epsilon-DP, then any quantile from the histogram will also be \epsilon-DP.
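Both properties can be checked exactly on a toy case. The sketch below assumes a single binary record and uses randomised response (not discussed on the slides) as the \epsilon-DP mechanism; all function names are illustrative.

```python
import math
from itertools import product


def randomized_response(epsilon):
    """Return p[x][t] = P(T = t | x) for a binary record x."""
    keep = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return {0: {0: keep, 1: 1.0 - keep}, 1: {0: 1.0 - keep, 1: keep}}


def privacy_loss(mech):
    """Largest |log P(T=t | x=0) / P(T=t | x=1)| over outputs t."""
    return max(abs(math.log(mech[0][t] / mech[1][t])) for t in mech[0])


def compose(m1, m2):
    """Joint release (T1, T2) of two independent mechanisms on the same x."""
    return {
        x: {(t1, t2): m1[x][t1] * m2[x][t2]
            for t1, t2 in product(m1[x], m2[x])}
        for x in (0, 1)
    }


def post_process(mech, kernel):
    """Apply a data-independent kernel[t][u] = P(U = u | T = t)."""
    out = {}
    for x in (0, 1):
        out[x] = {}
        for t, p in mech[x].items():
            for u, k in kernel[t].items():
                out[x][u] = out[x].get(u, 0.0) + p * k
    return out


eps = 1.0
rr = randomized_response(eps)
joint = compose(rr, rr)
blurred = post_process(rr, {0: {0: 0.9, 1: 0.1}, 1: {0: 0.2, 1: 0.8}})
```

In this toy case `privacy_loss(joint)` equals 2\epsilon, while `privacy_loss(blurred)` stays below \epsilon.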

Variants of DP

There are many variants and relaxations of DP.

- Probabilistic DP (Guingona et al. 2023), with m(x,\cdot) the density of M(x,\cdot)

\inf_{x\sim x^\prime}\mathbb{P}_{x}\left[ m(x,T) \leq \exp\{\epsilon\} m(x^\prime, T) \right] \geq 1 - \delta

- Approximate DP (Dwork, Kenthapadi, et al. 2006)

M(x,S) \leq \exp\{\epsilon\} M(x^\prime, S) + \delta for all S \in \mathcal{S}.
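For a Gaussian noise mechanism the probabilistic DP failure probability has a closed form, sketched below for a single pair of neighbours. It assumes T \sim \mathcal{N}(\tau(x),\sigma^2) and a fixed positive `shift` = |\tau(x)-\tau(x^\prime)|; the log density ratio is then normal with mean shift^2/(2\sigma^2) and standard deviation shift/\sigma. Function names are illustrative.

```python
import math


def normal_cdf(z):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def prdp_delta_gaussian(epsilon, shift, sigma):
    """P_x[ m(x,T) > exp(epsilon) m(x',T) ] for one pair of neighbours."""
    mean_loss = shift ** 2 / (2.0 * sigma ** 2)
    sd_loss = shift / sigma
    return 1.0 - normal_cdf((epsilon - mean_loss) / sd_loss)


delta_small_noise = prdp_delta_gaussian(epsilon=0.5, shift=1.0, sigma=1.0)
delta_large_noise = prdp_delta_gaussian(epsilon=0.5, shift=1.0, sigma=2.0)
```

More noise lowers the failure probability, so `delta_large_noise` is smaller than `delta_small_noise`.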

Variants of DP

There are many variants and relaxations of DP.

- Rényi DP

- Gaussian DP

- Concentrated DP

- Many more!

Related to Lipschitz interpretation

Pure DP Semantics

- Hypothesis testing

- Type I & II error controlled by \epsilon (Wasserman and Zhou 2010)

- Bayesian evidence

- Prior-to-posterior ratio controlled (Dwork, McSherry, et al. 2006)

- Posterior-to-posterior ratio controlled (Kasiviswanathan and Smith 2014)

- Game theory

- Controls expected utility (Zhang et al. 2021)

Limitations of DP

- DP Semantics are post-hoc explanations

- Deterministic mechanisms are not covered by DP

- What about the mean of a large dataset?

Our approach is a semantics-first understanding of data privacy, where

- Privacy definitions are systematically constructed from an agent-based game

- Game assumptions have real-world interpretations

- Privacy is easier to interpret, communicate and tailor

What else is in the paper?

- Probabilistic and pure DP special cases

- Rényi and f-divergence DP also recovered by changing assumptions

- Privacy developed for deterministic mechanisms:

- empirical mean

- cell suppression

Privacy from the ground up or “semantics first”

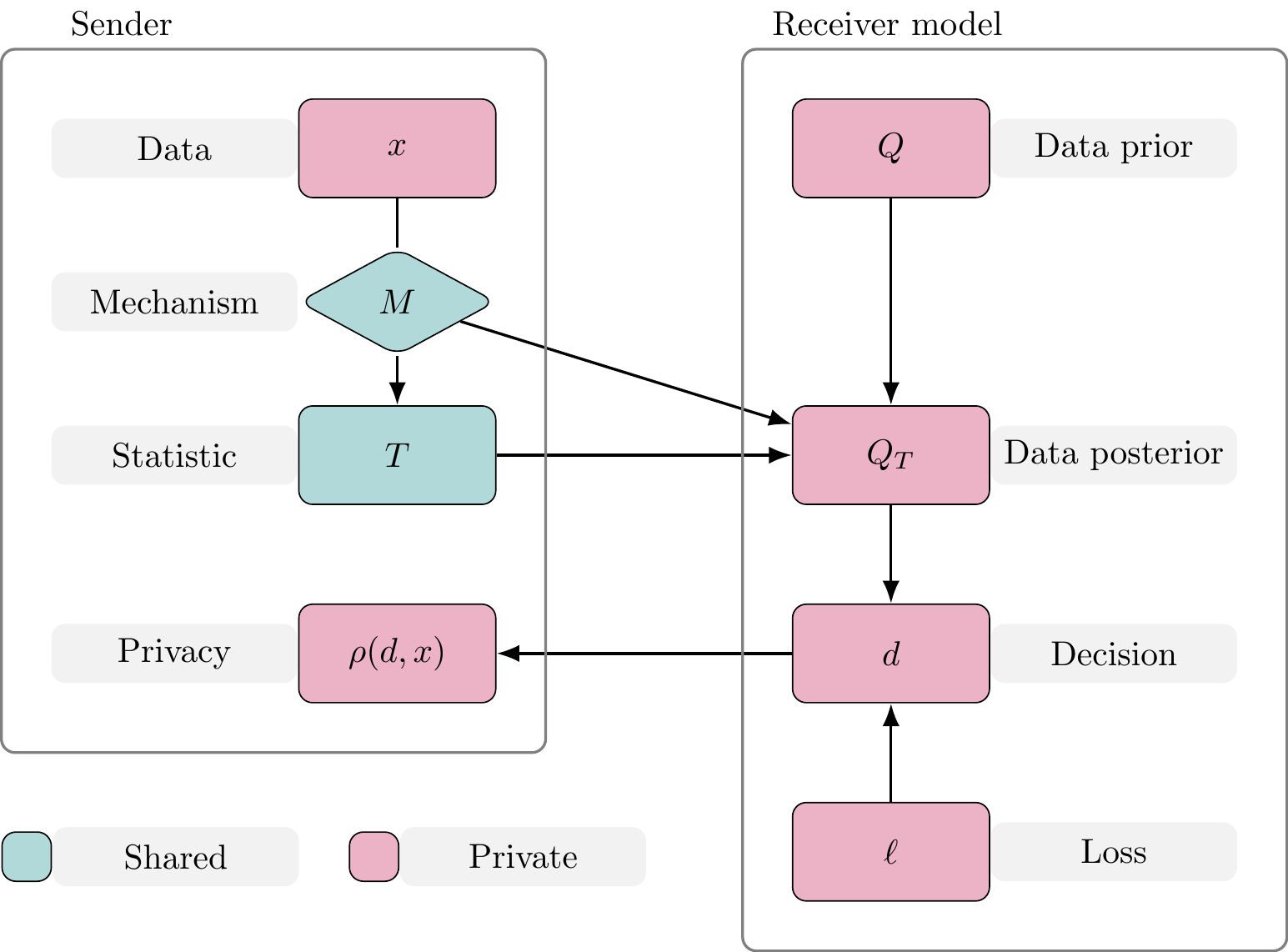

Overview

Sender

Government agency releases small area statistics on a disease

Receiver

Insurance company raises premiums using inferred disease prevalence in a small town

Effect on data privacy

Statistic release \longrightarrow adversarial decisions \longrightarrow privacy effect

An anatomy of data privacy

The players

Sender

- Custodian of data

- Uses a mechanism to release information about the data

Receiver

- Makes decision based on revealed information

- Decision affects Sender’s privacy

Privacy function

Sender’s privacy measured with a privacy function

\rho:\mathcal{D} \times \mathsf{X} \rightarrow \mathbb{R}

Receiver’s decision \quad d_i \in \mathcal{D}

Data value \quad x \in \mathsf{X}

\rho orders Sender’s preferences over decisions for a given dataset

- If d_1 is preferred to d_2 then \rho(d_1,x) > \rho(d_2,x)

Example 1: Privacy function

For some fixed \kappa>0,

\rho(d,x) = \begin{cases} 0, & \text{if } x \in d, \vert d \vert < \kappa\\ 1, & \text{otherwise}, \end{cases}

x \in \mathsf{X} = \mathbb{R}

d \in \mathcal{D} = \{[a,b]:a,b\in \mathbb{R}, a\leq b\}

Example 2: Privacy function

\rho(d,x) = -\log d(x)

x \in \mathsf{X} = \mathbb{R}

d \in \mathcal{D} = \{\text{probability density functions on } \mathbb{R}\}
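The two example privacy functions can be written down directly. In this sketch an interval decision is represented as a tuple `(a, b)` and a density decision as a Python callable; both representations are assumptions made for illustration.

```python
import math


def rho_interval(d, x, kappa):
    """Example 1: privacy is lost (0) only when the interval guess d = (a, b)
    contains x and is narrower than kappa; otherwise privacy is kept (1)."""
    a, b = d
    return 0 if (a <= x <= b and (b - a) < kappa) else 1


def rho_log(d, x):
    """Example 2: privacy is the negative log of the density d evaluated at x."""
    return -math.log(d(x))


def normal_pdf(mu, sigma):
    """Return the N(mu, sigma^2) density as a callable."""
    return lambda z: (
        math.exp(-0.5 * ((z - mu) / sigma) ** 2)
        / (sigma * math.sqrt(2.0 * math.pi))
    )


narrow_hit = rho_interval((0.9, 1.1), x=1.0, kappa=0.5)
wide_hit = rho_interval((0.0, 2.0), x=1.0, kappa=0.5)
sharp_guess = rho_log(normal_pdf(0.0, 0.1), 0.0)
vague_guess = rho_log(normal_pdf(0.0, 1.0), 0.0)
```

A sharper density concentrated on the true value gives a lower privacy value than a vague one.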

The statistic

A statistic T \in \mathsf{T} is output from a mechanism M given x

T \sim M(x,\cdot)

- M:\mathcal{T} \times \mathsf{X} \rightarrow [0,1] for (\mathsf{T},\mathcal{T}) measurable space

The statistic

M can be deterministic or randomised

- Summary statistics \quad\tau:\mathsf{X}\rightarrow\mathsf{T}

- M(x,\cdot) = \delta_{\tau(x)}(\cdot)

- Noisy summary statistics

- M(x,\cdot) = \mathcal{N}(~\cdot \mid \tau(x), \sigma^2)

- Composition of multiple mechanisms
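One way to picture these cases is to treat a mechanism as a sampler taking the data and a random generator. The sketch below uses that representation (an assumption for illustration) for a point-mass mechanism, a Gaussian-noise mechanism, and their joint release.

```python
import random
from statistics import fmean


def dirac(tau):
    """Deterministic mechanism M(x, .) = delta_{tau(x)}: always releases tau(x)."""
    return lambda x, rng: tau(x)


def gaussian_noise(tau, sigma):
    """Randomised mechanism M(x, .) = N(tau(x), sigma^2)."""
    return lambda x, rng: tau(x) + rng.gauss(0.0, sigma)


def compose(*mechanisms):
    """Joint release of several mechanisms applied to the same data."""
    return lambda x, rng: tuple(m(x, rng) for m in mechanisms)


x = [1.0, 2.0, 3.0]
rng = random.Random(1)
exact = dirac(fmean)(x, rng)
both = compose(dirac(fmean), gaussian_noise(max, 0.1))(x, rng)
```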

The statistic

M can be deterministic or randomised

- Result of optimisation

- MLE: \quad M(x,\cdot) = \delta_{\tau(x)}(\cdot)

- \tau(x) = \arg\max_{\theta \in \Theta} \log L(x \mid \theta)

- Monte Carlo output

- MCMC: \quad M(x,\mathrm{d}t_{1:N}) = \prod_{i=1}^N K(\mathrm{d}t_{i} \mid t_{i-1}, x)

- Noisy optimisation

- SGD: \quad M(x,\mathrm{d}t_N) = \int_{\mathsf{T}_{-N}}\prod_{i=1}^N K(\mathrm{d}t_{i} \mid t_{i-1}, x)

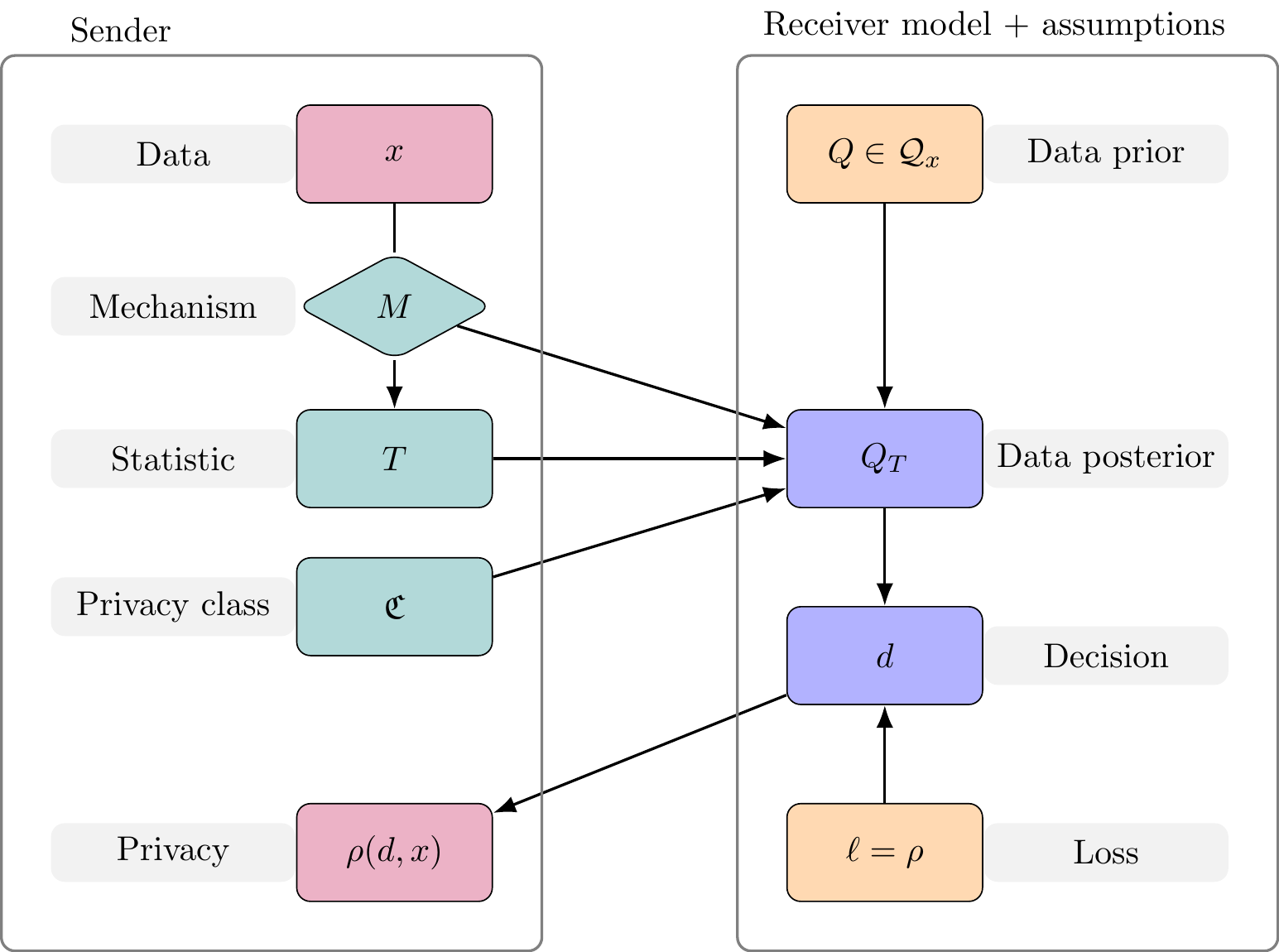

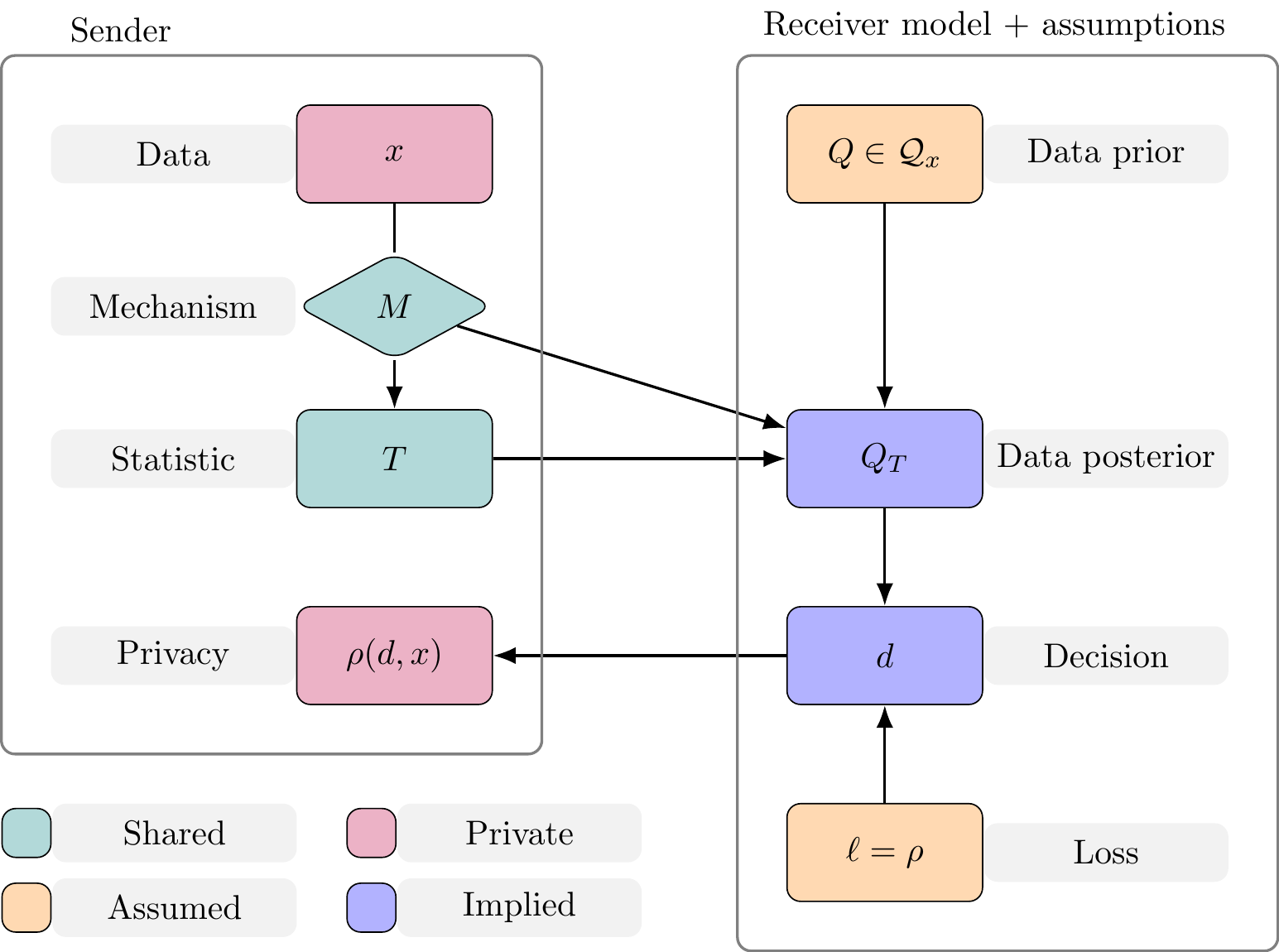

Transparency

Privacy class: A set of mechanisms that satisfy a given privacy definition.

If \mathfrak{C} is the privacy class generated by “\text{D}” then

M \in \mathfrak{C} \Longleftrightarrow M~\text{satisfies}~\text{D}

Assumption 1: Transparency

Sender shares the mechanism M and privacy class \mathfrak{C}, for which M \in \mathfrak{C}, with Receiver. Further, the definitions of M and \mathfrak{C} do not depend on the data.

Receiver

Assumption 2: Bayesian adversary

Receiver makes Bayesian decisions

- Holds a prior distribution Q over the data

- Constructs a posterior distribution Q_T from (M,T)

- Has loss function \ell: \mathcal{D} \times \mathsf{X} \rightarrow \mathbb{R}

Knowledge of \mathfrak{C} may affect Receiver’s data posterior; here it is assumed not to.
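The posterior Q_T can be computed by Bayes' rule once M is known. The sketch below assumes a finite set of candidate datasets and a noisy-mean mechanism with known \sigma; the candidate datasets and prior weights are made up for illustration.

```python
import math
from statistics import fmean


def gaussian_likelihood(t, z, sigma):
    """Density m(z, t) of the mechanism N(mean(z), sigma^2) evaluated at t."""
    mean = fmean(z)
    return (
        math.exp(-0.5 * ((t - mean) / sigma) ** 2)
        / (sigma * math.sqrt(2.0 * math.pi))
    )


def posterior(prior, t, sigma):
    """Update prior {dataset: Q(dataset)} to Q_T after observing T = t."""
    weights = {z: q * gaussian_likelihood(t, z, sigma) for z, q in prior.items()}
    total = sum(weights.values())
    return {z: w / total for z, w in weights.items()}


# Hypothetical two-candidate prior over datasets of size two.
prior = {(0.0, 1.0): 0.5, (1.0, 1.0): 0.5}
post = posterior(prior, t=1.0, sigma=0.5)
```

Observing T = 1.0 shifts weight towards the candidate whose mean is 1.0.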

An anatomy of data privacy

Receiver’s optimal decision

From Assumptions 1 and 2:

Receiver’s optimal decision

d^{Q_T} \in \arg\inf_{d \in \mathcal{D}} \mathbb{E}_{z \sim Q_T}[ \ell(d,z)]

To model Receiver’s decision:

We need assumptions on Q and \ell

Receiver model

Assumption 3: Adversarial loss function

Receiver has loss function \ell(d,x) = \rho(d,x)

- Interpretation 1: Receiver targets privacy

If d^P_{\ell^\prime} is Receiver’s optimal decision under (P,\ell^\prime) then

\mathbb{E}_{x \sim P}[ \rho(d^{P}_\rho,x)] \leq \mathbb{E}_{x \sim P}[ \rho(d^P_{\ell^\prime},x)]

- Interpretation 2: data-averaged worst-case for Sender

Receiver model

Assumption 4: Adversarial prior class

Let the data-prior Q \in \mathcal{Q}_x

Implies privacy outcome in terms of

Receiver’s decision

d^{Q_T} \in \arg\inf_{d \in \mathcal{D}} \mathbb{E}_{z \sim Q_T}[ \rho(d,z)]

Privacy outcome

\inf_{Q \in \mathcal{Q}_x} \rho(d^{Q_T},x)

An anatomy of data privacy

Measuring privacy

Privacy outcome

\inf_{Q \in \mathcal{Q}_x} \rho(d^{Q_T},x)

- Let S: \mathcal{P} \times \mathsf{X} \rightarrow \mathbb{R} such that S(Q_T,x) = \rho(d^{Q_T},x)

- S is a (negatively-orientated) proper scoring rule

- A “privacy score”

Measuring privacy

Privacy outcome

\inf_{Q \in \mathcal{Q}_x} S(Q_T,x)

- Use known proper scoring rules to measure privacy outcome

- Create new proper scoring rules from \rho

- For example when d is a PDF/PMF:

\rho(d,x) = -\log d(x) \quad\Longrightarrow\quad S(Q_T,x)=-\log q_T(x).

Without loss of generality, we focus on proper scoring rules.
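The log-score case can be checked numerically: under \rho(d,x) = -\log d(x), a Bayesian Receiver minimises expected loss by reporting their own belief, which is what makes S(Q_T,x) = -\log q_T(x). The sketch assumes a finite data space and a small hand-picked set of candidate decisions.

```python
import math


def expected_log_loss(belief, d):
    """E_{z ~ belief}[ -log d(z) ] for PMFs given as dicts."""
    return sum(p * -math.log(d[z]) for z, p in belief.items() if p > 0)


def log_score(q, x):
    """Privacy score S(Q, x) = -log q(x)."""
    return -math.log(q[x])


belief = {"a": 0.7, "b": 0.2, "c": 0.1}  # stands in for Receiver's Q_T
candidates = [
    {"a": 0.7, "b": 0.2, "c": 0.1},       # report the belief itself
    {"a": 1 / 3, "b": 1 / 3, "c": 1 / 3}, # report a uniform PMF
    {"a": 0.9, "b": 0.05, "c": 0.05},     # report an over-confident PMF
]
best = min(candidates, key=lambda d: expected_log_loss(belief, d))
score_at_a = log_score(best, "a")
```

Among the candidates, the belief itself attains the smallest expected loss.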

Measuring privacy

Privacy outcome

\inf_{Q \in \mathcal{Q}_x} S(Q_T,x)

- This is an absolute measure of privacy

- We will look at a relative measure of privacy

Measuring privacy

Relative privacy outcome

\inf_{Q \in \mathcal{Q}_x} \left[ S(Q_T,x) - S(Q,x) \right]

- Considers Receiver’s information gain

- Sender’s relative privacy change (worst-case)
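A small numerical sketch of the relative outcome under the log score, assuming Receiver's prior puts mass only on the true x and one alternative. The likelihood values and the grid of prior weights are hypothetical.

```python
import math


def posterior_weight(prior_x, lik_x, lik_alt):
    """Posterior mass on x for a two-point prior with Q({x}) = prior_x."""
    return prior_x * lik_x / (prior_x * lik_x + (1.0 - prior_x) * lik_alt)


def relative_outcome(prior_x, lik_x, lik_alt):
    """S(Q_T, x) - S(Q, x) with the log score S(P, x) = -log p(x)."""
    return -math.log(posterior_weight(prior_x, lik_x, lik_alt)) + math.log(prior_x)


# Hypothetical likelihoods m(x, t) and m(x', t) at the observed t.
lik_x, lik_alt = 0.75, 0.25
prior_grid = [i / 100 for i in range(1, 100)]
worst = min(relative_outcome(p, lik_x, lik_alt) for p in prior_grid)
```

The worst case occurs for priors that place little mass on the true x, and is bounded below by -\log(0.75/0.25).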

But…

- Q_T may be random since T\sim M(x, \cdot)

Persuasive privacy

A privacy definition

Let \mathcal{Q}_x \subset \mathscr{P}(\mathsf{X},\mathcal{X}) be a class of priors,

M: \mathcal{T}\times \mathsf{X} \rightarrow \mathbb{R}_+ be a mechanism,

S be a privacy score, and \kappa \geq 0, 0\leq\delta \ll 1 be constants.

Definition: Persuasive Privacy

We say M is (\mathcal{Q}_x, S, \kappa, \delta)-PP if

\inf_{x\in\mathsf{X}}\inf_{Q\in\mathcal{Q}_x}\mathbb{P}_x\left[S(Q, x) - S(Q_{T}, x) \leq \kappa \right] \geq 1 - \delta,

where \mathbb{P}_x is w.r.t. T \sim M(x,\cdot).

- Change in privacy: S(Q, x) - S(Q_{T}, x)

- Maximum allowable privacy change: \kappa

- Maximum probability of leakage: \delta
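The definition can be checked exactly in a toy setting. The sketch below assumes a single binary record, randomised response as the mechanism, the log score, and a grid of two-point priors standing in for \mathcal{Q}_x; none of these choices come from the paper.

```python
import math


def rr_likelihood(epsilon):
    """m(x, t) for randomised response on a binary record."""
    keep = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return lambda x, t: keep if t == x else 1.0 - keep


def privacy_change(m, x, x_alt, prior_x, t):
    """S(Q, x) - S(Q_T, x) under the log score, for a prior Q on {x, x_alt}."""
    evidence = prior_x * m(x, t) + (1.0 - prior_x) * m(x_alt, t)
    return math.log(m(x, t) / evidence)


def satisfies_pp(m, kappa, delta, prior_grid):
    """Check inf_x inf_Q P_x[ privacy change <= kappa ] >= 1 - delta."""
    worst = 1.0
    for x in (0, 1):
        x_alt = 1 - x
        for prior_x in prior_grid:
            ok = sum(
                m(x, t) for t in (0, 1)
                if privacy_change(m, x, x_alt, prior_x, t) <= kappa
            )
            worst = min(worst, ok)
    # Small tolerance guards against floating-point rounding in the sum.
    return worst >= 1.0 - delta - 1e-12


m = rr_likelihood(1.0)
grid = [i / 100 for i in range(1, 100)]
```

With these choices, `satisfies_pp(m, 1.0, 0.0, grid)` holds, while tightening to \kappa = 0.5 requires a large \delta.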

Why “persuasive”?

Sender chooses a mechanism that persuades Receiver to make decisions limiting privacy loss.

Similar to Bayesian persuasion (Kamenica and Gentzkow 2011). However,

- asymmetry between Sender and Receiver

- utility functions of Sender and Receiver are related

- Sender assesses decisions (mechanism) robustly: worst-case not expected value

Properties of Persuasive Privacy

Composition

Receiver post-processing

Definition: Receiver Post-Processing

A guarantee \mathrm{D} satisfies the receiver post-processing property if M \in \mathfrak{C}(\mathrm{D}) implies that M\otimes K \in \mathfrak{C}(\mathrm{D}) for all Markov kernels K independent of the data x.

- Persuasive privacy does not, in general, satisfy (Sender) post-processing

Returning to DP

Recall the Persuasive Privacy elements (\mathcal{Q}_x, S, \kappa, \delta), and consider

- Family of alternative hypothesis priors

\mathcal{H}_x = \{ Q \in \mathcal P_2:x^\prime \sim x, Q(\{ x , x^\prime \})= 1 \}

- Log-probability scoring rule

S(P,x) = -\log p(x)

Returning to DP

The alternative hypothesis prior class and log-probability score recover probabilistic DP (PrDP) as an instance of Persuasive Privacy

\inf_{x\sim x^\prime}\mathbb{P}_{x}\left[ m(x,T) \leq \exp\{\epsilon\} m(x^\prime, T) \right] \geq 1 - \delta

- Pure DP is a special case (\delta = 0)

- PrDP implies Approximate DP
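A sketch of why this reduction might hold, assuming the two-point prior has Q(\{x\}) = \pi with 0 < \pi < 1 and writing m for the density of M. The paper's own argument may differ in detail.

```latex
% Posterior mass on x under the two-point prior
q_T(x) = \frac{\pi\, m(x,T)}{\pi\, m(x,T) + (1-\pi)\, m(x^\prime,T)}

% Change in privacy under the log-probability score S(P,x) = -\log p(x)
S(Q,x) - S(Q_T,x) = \log\frac{q_T(x)}{\pi}
  = \log\frac{m(x,T)}{\pi\, m(x,T) + (1-\pi)\, m(x^\prime,T)}

% The right-hand side is monotone in \pi, with limits \pi \to 0 and \pi \to 1
\sup_{\pi \in (0,1)} \left[ S(Q,x) - S(Q_T,x) \right]
  = \max\left\{ 0,\ \log\frac{m(x,T)}{m(x^\prime,T)} \right\}

% Hence, with \kappa = \epsilon \geq 0, bounding the change for all such priors
% is the same event as in probabilistic DP
S(Q,x) - S(Q_T,x) \leq \epsilon ~\text{for all}~ \pi
  \iff m(x,T) \leq \exp\{\epsilon\}\, m(x^\prime,T)
```

Because the event is monotone in \pi, the infimum over priors of its probability is the probability of the limiting event, which gives the PrDP inequality with the same \delta.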

Returning to DP

- Pure, probabilistic, and approximate DP are notions of privacy relative to the change in Receiver’s knowledge

- Can be derived by considering the persuasive privacy game

- Approximate DP (ADP) satisfies the (Sender) post-processing property

- PrDP does not (unless pure DP)

- Typically a criticism of PrDP

- PrDP does have (Receiver) post-processing property

- A simple fix: control Receiver’s decision by including the pre-processed statistic

Summary

- Framework for purpose-driven privacy definitions (rigorously justified)

- All assumptions stated, anatomy used as backbone

- Assessment of existing privacy guarantees with game theory

- New interpretations of post-processing property

- Easy fix for PrDP post-processing

- Established that privacy guarantees are possible for deterministic algorithms

Thank you!

Appendix

Privacy for deterministic mechanisms

- DP cannot be satisfied by non-constant deterministic mechanisms

- The persuasive privacy framework is flexible enough to cover them

- Demonstrate privacy for mean \bar x

M(x,\cdot) = \delta_{\bar x}(\cdot)

Privacy for deterministic mechanisms

Use class of Gaussian distributions

\mathcal{G}_{x}^{r} = \left\{ \mathcal{N}(\mu,\Sigma): \frac{(\bar{x}-\bar{\mu})^2}{\overline{\Sigma}} \leq r_1 , c_\Phi \leq r_2 \left(1- \frac{\sigma_i^2}{\Vert\sigma \Vert_2^2} \right) \right\}

for r_1 > 0 and r_2 > 1.

- Data posterior after observing \bar x is multivariate normal but degenerate

- Support only on subspace \{z\in \mathbb{R}^n: \bar z = \bar x\}
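The degenerate posterior has a simple form in the special case of a diagonal \Sigma, which is an assumption made here for illustration. Conditioning independent normals on their mean shrinks each marginal variance by the factor 1 - \sigma_i^2/\Vert\sigma\Vert_2^2, the same factor that appears in the class above.

```python
def condition_on_mean(mu, var, x_bar):
    """Marginal means and variances of z ~ N(mu, diag(var)) given mean(z) = x_bar."""
    n = len(mu)
    total_var = sum(var)
    gap = n * x_bar - sum(mu)  # how far the observed total is from the prior total
    post_mu = [m + v / total_var * gap for m, v in zip(mu, var)]
    post_var = [v * (1.0 - v / total_var) for v in var]
    return post_mu, post_var


post_mu, post_var = condition_on_mean(
    mu=[0.0, 0.0, 0.0], var=[1.0, 1.0, 1.0], x_bar=1.0
)
```

The conditional means always average to the observed \bar x, reflecting the support constraint \bar z = \bar x.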

Privacy for deterministic mechanisms

Use (marginal) Dawid–Sebastiani Score

D_i(Q,x) = \log \sigma^2_i(Q) + \frac{[x_i - \mu_i(Q)]^2}{\sigma^{2}_i(Q)}

for each element of data, and consider the worst-case element for privacy.
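A minimal sketch of the marginal score and the worst-case element, assuming each distribution is summarised by a list of `(mean, variance)` pairs, one per data element. The helper `worst_case_change` and the numbers are illustrative.

```python
import math


def dawid_sebastiani(mu_i, var_i, x_i):
    """Marginal Dawid-Sebastiani score: log variance plus squared standardised error."""
    return math.log(var_i) + (x_i - mu_i) ** 2 / var_i


def worst_case_change(prior, posterior, x):
    """Largest D_i(Q, x) - D_i(Q_T, x) over data elements i."""
    return max(
        dawid_sebastiani(m0, v0, xi) - dawid_sebastiani(m1, v1, xi)
        for (m0, v0), (m1, v1), xi in zip(prior, posterior, x)
    )


# Hypothetical marginals before and after the release.
prior = [(0.0, 1.0), (0.0, 1.0)]
posterior = [(1.0, 0.5), (1.0, 0.5)]
change = worst_case_change(prior, posterior, x=[1.0, 1.0])
```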

Privacy for deterministic mechanisms

Proposition

The average mechanism M(x,\cdot) = \delta_{\bar{x}} satisfies (\mathcal{I},\mathcal{G}_{x}^{r},r_1+ \log r_2,0)-PP.

- Possible to have a rigorous guarantee for a deterministic mechanism

- Opens path for privacy guarantees for convergent iterative algorithms

- e.g. MCMC without privacy perturbations (posterior summary statistics)

- A “decomposition rule”

Ongoing work

Short term

Decomposition rule (for deterministic mechanisms)

- Use noise in MCMC as additional privacy

Nonparametric prior families

Independent Commissioner Against Corruption (SA)

Medium term

Privacy-utility trade-off

Computation (SMC, SBI) versus privacy approximation trade-off